Real-Time Streaming Systems

Batch is a special case of streaming. When your business needs to react in seconds instead of hours, you need an architecture built for continuous data flow.

When You Need This

Your dashboards are stale by the time anyone looks at them. Fraud detection runs as an overnight batch job, catching fraud the next morning. Inventory counts are updated hourly, causing overselling. Sensor data is collected but not acted on until it's analyzed in a nightly ETL. You need a system where data flows continuously from sources through processing to consumers with sub-second latency — real-time analytics, live notifications, streaming AI inference, and instant synchronization between systems.

Pattern Overview

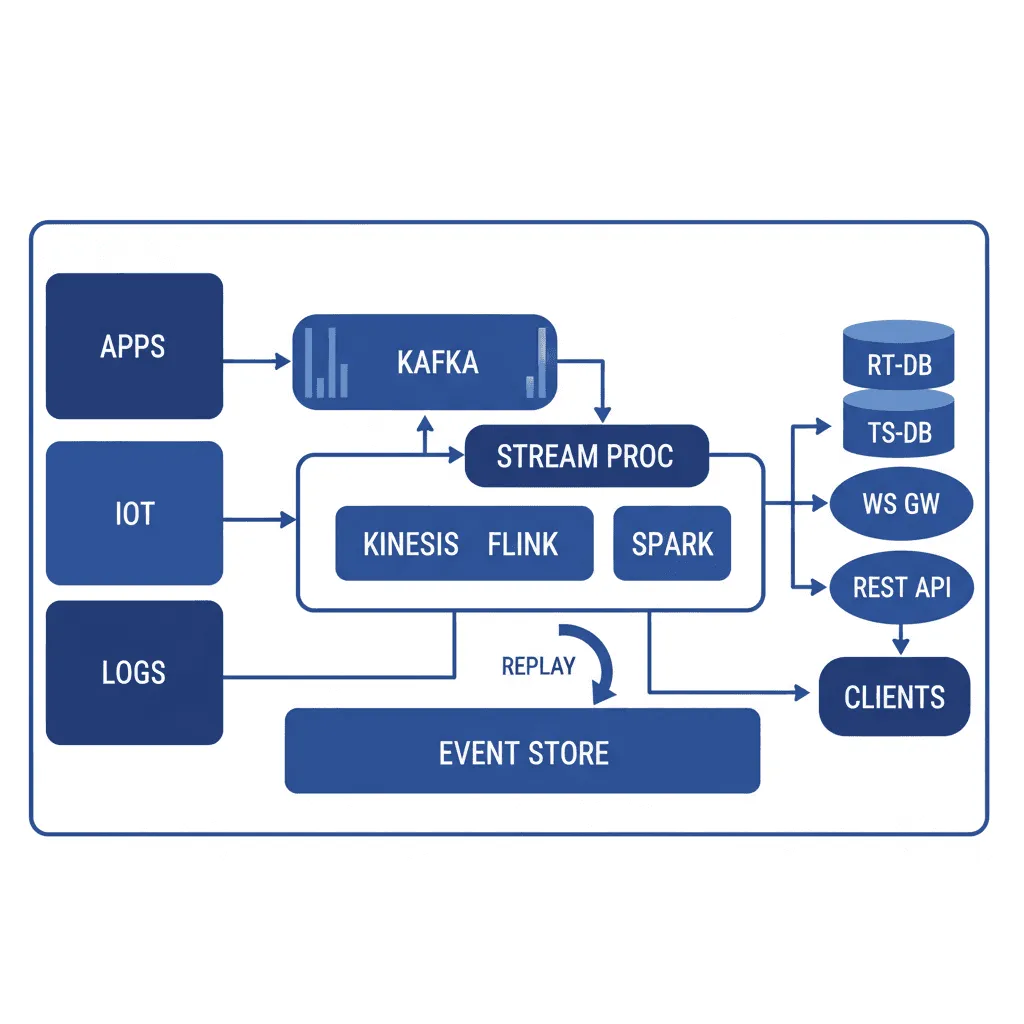

Real-time streaming architecture processes data as a continuous, unbounded flow rather than discrete batches. Event producers publish to a streaming platform (Kafka, Kinesis, Pulsar). Stream processors (Flink, Kafka Streams, custom consumers) transform, enrich, filter, and aggregate events in-flight. Processed results are pushed to consumers: real-time dashboards (WebSocket), search indices (Elasticsearch), analytics databases (ClickHouse), and downstream services. Change Data Capture (CDC) enables existing databases to participate as event sources without application changes.
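To make "aggregate events in-flight" concrete, here is a minimal sketch of a tumbling-window sum in plain Python. The `Event` type, the sensor keys, and the 5-second window are illustrative assumptions, not part of any specific engine. A production processor such as Flink would also handle out-of-order events and state eviction, which this sketch ignores.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class Event(NamedTuple):
    key: str           # hypothetical entity ID, e.g. a sensor
    timestamp_ms: int  # event time in milliseconds
    value: float


def tumbling_window_sums(
    events: Iterable[Event], window_ms: int
) -> dict[tuple[str, int], float]:
    """Sum values per key within fixed-size, non-overlapping windows."""
    sums: dict[tuple[str, int], float] = defaultdict(float)
    for event in events:
        # Align the event to the start of the window that contains it.
        window_start = (event.timestamp_ms // window_ms) * window_ms
        sums[(event.key, window_start)] += event.value
    return dict(sums)


events = [
    Event("sensor-a", 1_000, 2.0),
    Event("sensor-a", 4_500, 3.0),
    Event("sensor-b", 4_900, 1.0),
    Event("sensor-a", 5_200, 4.0),
]
result = tumbling_window_sums(events, window_ms=5_000)
```

Each result key is a `(key, window_start)` pair, so the same entity appears once per window it was active in.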

Reference Architecture

The architecture has four layers. Event sources produce data — application events, database CDC streams, IoT telemetry, user clickstreams, external API webhooks. The streaming platform (Kafka) provides durable, ordered, replayable event storage. Stream processors consume from topics, apply transformations (filtering, enrichment, windowed aggregation, joins), and produce to output topics or sinks. Consumers subscribe to processed streams — WebSocket servers push to browsers, connectors sink to databases, alerting engines evaluate rules and fire notifications.
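The ordering guarantee of the streaming platform is per partition, which is why the partition key matters. The sketch below illustrates key-based routing with a hypothetical `partition_for` helper. It uses CRC32 purely as a stand-in hash (Kafka's Java client uses murmur2 for keyed records), and the order IDs are made up for illustration.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition deterministically (CRC32 is a stand-in hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Route a small stream of (key, payload) events into 4 partitions.
partitions: dict[int, list[tuple[str, str]]] = defaultdict(list)
stream = [
    ("order-1", "created"),
    ("order-2", "created"),
    ("order-1", "paid"),
    ("order-1", "shipped"),
]
for key, payload in stream:
    partitions[partition_for(key, 4)].append((key, payload))

# All events for one key land in one partition, in the order they were produced.
order_1_partition = partition_for("order-1", 4)
order_1_events = [p for k, p in partitions[order_1_partition] if k == "order-1"]
```

Because the mapping depends on the partition count, changing the number of partitions later can move a key to a different partition. That is one reason to size partitions generously up front.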

- Streaming Platform (Kafka): Multi-broker cluster with topic-per-event-type organization. Partitioned for parallelism (partition key = entity ID for ordering guarantees). Retention configured per topic — 7 days for operational events, 30+ days for audit/replay. Schema Registry (Confluent or Apicurio) enforces event schema compatibility across producers and consumers

- Change Data Capture: Debezium connectors capture row-level changes from PostgreSQL, MySQL, or MongoDB and publish them as events to Kafka. This turns existing databases into event sources without modifying application code — essential for incremental migration to event-driven architectures

- Stream Processing Engine: Apache Flink for complex event processing — windowed aggregations, stream-stream joins, pattern detection. Kafka Streams for simpler transformations that don't need a separate processing cluster. Custom Node.js/Python consumers for lightweight event handling

- Real-Time Delivery: WebSocket server (Socket.io, native WS) for pushing live updates to browser clients. Server-Sent Events (SSE) for one-directional streaming. GraphQL Subscriptions for type-safe real-time queries. Fan-out architecture that decouples producer throughput from consumer connection count
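As a rough illustration of the Change Data Capture component, the sketch below applies Debezium-style change events to an in-memory replica. It assumes the unwrapped event envelope with `op`, `before`, and `after` fields, and a primary key column named `id`; the table contents and the `apply_change` helper are hypothetical.

```python
from typing import Any


def apply_change(replica: dict[Any, dict], event: dict, pk: str = "id") -> None:
    """Apply one row-level change event to a dict keyed by primary key."""
    op = event["op"]
    if op in ("c", "r", "u"):      # create, snapshot read, update
        row = event["after"]
        replica[row[pk]] = row
    elif op == "d":                # delete: the row state is in "before"
        replica.pop(event["before"][pk], None)
    else:
        raise ValueError(f"unsupported op: {op!r}")


replica: dict[Any, dict] = {}
changes = [
    {"op": "c", "before": None, "after": {"id": 1, "sku": "A", "qty": 10}},
    {"op": "u", "before": {"id": 1, "sku": "A", "qty": 10},
     "after": {"id": 1, "sku": "A", "qty": 7}},
    {"op": "c", "before": None, "after": {"id": 2, "sku": "B", "qty": 3}},
    {"op": "d", "before": {"id": 2, "sku": "B", "qty": 3}, "after": None},
]
for change in changes:
    apply_change(replica, change)
```

Writing the full `after` row on every create or update makes the handler safe to re-run, which matters when a consumer restarts and re-reads recent events.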

Design Decisions & Trade-offs

Technology Choices

| Layer | Technologies |
|---|---|
| Streaming | Apache Kafka (MSK, Confluent), Kinesis, Apache Pulsar, Redpanda |
| CDC | Debezium, AWS DMS, Maxwell |
| Processing | Apache Flink, Kafka Streams, Benthos, custom consumers |
| Real-Time Delivery | WebSocket (Socket.io), SSE, GraphQL Subscriptions |
| Analytics | ClickHouse, Apache Druid, Elasticsearch, TimescaleDB |
| Observability | Kafka lag monitoring (Burrow), Flink metrics, custom latency tracking |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Business decisions need sub-second data freshness (fraud, monitoring, trading) | Batch processing with hourly/daily freshness meets the business need |
| Multiple consumers need the same event stream (fan-out, decoupled systems) | You have a single producer and single consumer, so a simple queue suffices |
| You need event replay for debugging, reprocessing, or building new consumers | The data volume is low (< 1K events/min) and doesn't justify streaming infrastructure |
| CDC is needed to sync existing databases to downstream systems without code changes | The team lacks experience with distributed systems, since streaming adds significant operational complexity |

Our Approach

MicrocosmWorks designs streaming systems around the "replay principle": every stream should be replayable from a point in time, so new consumers can backfill historical data and existing consumers can reprocess after bug fixes. Our Kafka deployments include schema evolution policies (backward-compatible by default), consumer lag alerting (so lag is caught before it becomes a business-visible delay), and dead-letter topics with automated retry. We've built streaming pipelines processing 500K+ events/second for video analytics, IoT telemetry, and real-time dashboards.
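The replay principle can be sketched with a small append-only log that maps a timestamp to an offset. The `ReplayableLog` class below is a hypothetical in-memory stand-in for a topic partition and assumes timestamps arrive in non-decreasing order. Kafka clients expose a comparable timestamp-to-offset lookup (`offsetsForTimes` in the Java consumer).

```python
import bisect


class ReplayableLog:
    """In-memory stand-in for one partition: append-only, offset-addressed."""

    def __init__(self) -> None:
        self._timestamps: list[int] = []
        self._records: list[str] = []

    def append(self, timestamp_ms: int, record: str) -> int:
        """Append a record and return its offset."""
        if self._timestamps and timestamp_ms < self._timestamps[-1]:
            raise ValueError("timestamps must be non-decreasing in this sketch")
        self._timestamps.append(timestamp_ms)
        self._records.append(record)
        return len(self._records) - 1

    def offset_for_time(self, timestamp_ms: int) -> int:
        """Return the first offset whose timestamp is >= timestamp_ms."""
        return bisect.bisect_left(self._timestamps, timestamp_ms)

    def read_from(self, offset: int) -> list[str]:
        """Return every record at or after the given offset."""
        return self._records[offset:]


log = ReplayableLog()
log.append(100, "a")
log.append(200, "b")
log.append(300, "c")

# A new consumer backfills everything from t=200 onward.
replayed = log.read_from(log.offset_for_time(200))
```

The log never mutates existing records, so two consumers replaying from the same point in time see the same sequence.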

Related Blueprints

- Real-Time AI Video Surveillance System — Live video event streaming with real-time inference

- Live Sports Highlight Generator — Real-time event detection and highlight extraction

- Connected Fleet Management System — Vehicle telemetry streaming with geofencing

- Supply Chain Visibility Platform — Real-time supply chain event tracking

Related Case Studies

- AI Surveillance — RTSP Streaming — Real-time RTSP video stream processing with event detection

- Video Analysis — Live video analytics with streaming inference pipelines

- Video Encoding — AWS Fast Channel HLS/SRT streaming infrastructure

Frequently Asked Questions

MicrocosmWorks recommends Kafka for teams that need multi-consumer replay, long retention periods, and cross-cloud portability, as its log-based architecture supports unlimited consumer groups re-reading the same data stream independently. Kinesis is the better choice when you want a fully managed service tightly integrated with the AWS ecosystem and your data retention needs are under 7 days with fewer than 10 consumer applications. We evaluate your specific requirements—throughput, retention, consumer patterns, and operational maturity—during our architecture assessment to make the right recommendation.

MicrocosmWorks implements exactly-once semantics through a combination of idempotent producers, transactional writes read by consumers in read-committed mode, and deduplication layers that use event fingerprints stored in a fast lookup cache like Redis. For Kafka-based systems, we leverage Kafka's built-in transactional API, which atomically commits consumer offsets and producer writes; for custom streaming pipelines, we implement the outbox pattern with deduplication at the consumer. We always design consumers to be idempotent as a safety net, so even if the exactly-once mechanism has an edge-case failure, reprocessing an event produces the same result.
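A minimal sketch of consumer-side deduplication by event fingerprint follows. The in-memory `set` is a stand-in for a cache like Redis, and the `DedupingConsumer` class, the `event_id` field, and the account balances are assumptions for illustration. Fingerprinting the whole payload only works if each event carries a unique identifier; otherwise two legitimately identical events would collapse into one.

```python
import hashlib
import json


def fingerprint(event: dict) -> str:
    """Hash a canonical JSON encoding so key order does not affect the result."""
    canonical = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class DedupingConsumer:
    def __init__(self) -> None:
        self.seen: set[str] = set()          # stand-in for a Redis-backed cache
        self.balances: dict[str, int] = {}   # hypothetical downstream state

    def handle(self, event: dict) -> bool:
        """Apply the event once; return False if it was a duplicate."""
        fp = fingerprint(event)
        if fp in self.seen:
            return False
        account = event["account"]
        self.balances[account] = self.balances.get(account, 0) + event["amount"]
        self.seen.add(fp)
        return True


consumer = DedupingConsumer()
deposit = {"event_id": "evt-001", "account": "acct-1", "amount": 50}
first = consumer.handle(deposit)
# Redelivery with a different key order still produces the same fingerprint.
second = consumer.handle({"amount": 50, "account": "acct-1", "event_id": "evt-001"})
```

In a shared cache the check and the insert need to be a single atomic operation, and the fingerprint store needs an expiry policy so it does not grow without bound.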

MicrocosmWorks typically delivers end-to-end latencies of 50-200ms for streaming pipelines that include ingestion, processing, and sink writing, with sub-10ms achievable for simpler passthrough or filtering workloads using in-memory stream processors like Apache Flink or Kafka Streams. The largest latency contributors are usually network hops, serialization overhead, and sink write batching, which we tune based on your latency-versus-throughput tradeoff preferences. During our architecture design, we set explicit latency SLOs per pipeline stage and build monitoring dashboards that track p50, p95, and p99 latencies in production.
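To show how p50, p95, and p99 relate to a latency SLO, here is a small sketch using the nearest-rank percentile method. The sample latencies and the 200 ms threshold are illustrative assumptions, and a monitoring system would normally compute percentiles from histograms rather than raw samples.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


# Hypothetical end-to-end latencies in milliseconds for one pipeline.
latencies_ms = [12, 15, 18, 20, 22, 25, 30, 45, 80, 190]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)

SLO_P99_MS = 200  # assumed SLO for this example
within_slo = p99 <= SLO_P99_MS
```

With only ten samples, p95 and p99 both resolve to the slowest observation, which shows why tail percentiles need a large sample window to be meaningful.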

MicrocosmWorks implements schema registries (typically Confluent Schema Registry or AWS Glue Schema Registry) that enforce backward and forward compatibility rules, ensuring that producers can evolve their data formats without breaking existing consumers. We use Avro or Protobuf serialization with explicit schema versioning, so every message identifies the schema version it was written with and can be deserialized even if the schema has changed since it was produced. Our CI/CD pipelines include automated schema compatibility checks that block deployments if a proposed schema change would break downstream consumers.
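The kind of compatibility check a CI pipeline might run can be sketched as follows. The schema representation (a dict of field name to `type` and optional `default`) is a simplified, hypothetical stand-in for real Avro or Protobuf schemas, and the rule is stricter than Avro's because it rejects every type change, including the promotions Avro allows.

```python
def backward_compatibility_problems(old: dict, new: dict) -> list[str]:
    """List reasons a reader using `new` could fail on data written with `old`."""
    problems: list[str] = []
    for name, spec in new.items():
        if name not in old:
            # A field missing from old data needs a default the reader can use.
            if "default" not in spec:
                problems.append(f"new field {name!r} has no default")
        elif old[name]["type"] != spec["type"]:
            problems.append(
                f"field {name!r} changed type "
                f"{old[name]['type']!r} -> {spec['type']!r}"
            )
    # Fields removed in `new` are fine: the reader simply ignores them.
    return problems


v1 = {"id": {"type": "string"}, "amount": {"type": "double"}}
v2_ok = {**v1, "currency": {"type": "string", "default": "USD"}}
v2_bad = {**v1, "currency": {"type": "string"}}

ok_problems = backward_compatibility_problems(v1, v2_ok)
bad_problems = backward_compatibility_problems(v1, v2_bad)
```

A deployment gate would fail the build whenever the returned list is non-empty.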

MicrocosmWorks recommends a minimum of 2-3 engineers with experience in distributed systems, stream processing frameworks, and infrastructure automation to maintain a production streaming platform reliably. For companies that do not want to build this expertise in-house, we offer managed streaming platform support at $15-$40/hr where our team handles cluster operations, performance tuning, and incident response while your developers focus on building stream processing applications. We also provide training programs that upskill your existing engineering team on Kafka, Flink, or Kinesis operations over 4-8 week engagements.
