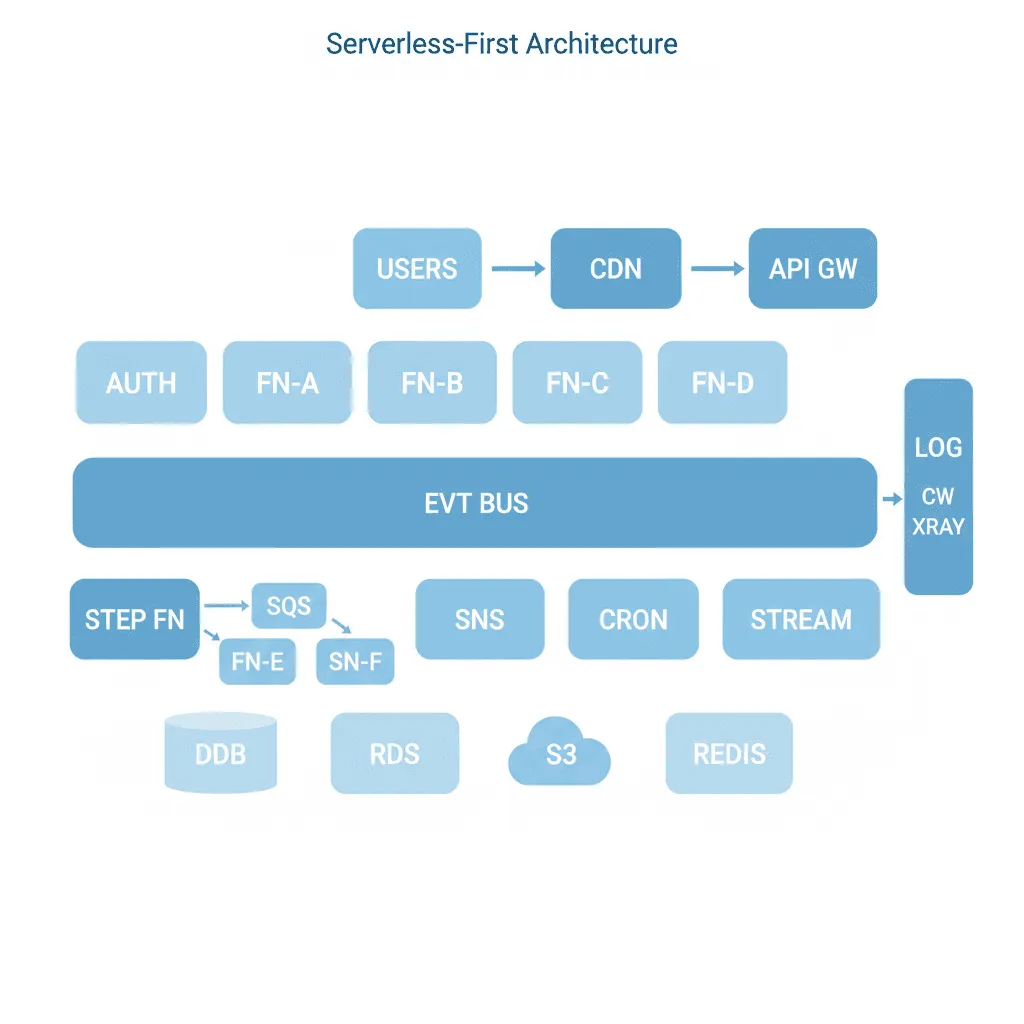

Serverless-First Architecture

Pay for what you use, scale to zero when you don't, and stop managing servers entirely — but know when the economics stop working.

When You Need This

Your application has variable traffic — quiet overnight, spikes during business hours, and unpredictable bursts from marketing campaigns or seasonal events. You're paying for servers that sit idle 70% of the time. Or you're building a new product and don't want to invest in infrastructure provisioning, capacity planning, and on-call rotation before you've validated product-market fit. Serverless gives you per-request pricing, automatic scaling, and zero infrastructure management — but only when the workload characteristics fit.

Pattern Overview

Serverless-first architecture builds applications entirely on managed, scale-to-zero compute services (Lambda, Cloud Functions, Vercel Functions) connected by managed event services (EventBridge, SQS, Step Functions). There are no servers to patch, no clusters to resize, no capacity to plan. Functions execute in response to events (HTTP requests, queue messages, schedule triggers, database changes) and scale automatically from zero to thousands of concurrent instances. The pattern extends to serverless databases (DynamoDB, Neon, PlanetScale), serverless queues (SQS), and serverless orchestration (Step Functions, Temporal Cloud).
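
As a rough illustration of "functions execute in response to events", here is a minimal sketch of a stateless HTTP function. It assumes the API Gateway proxy event and response shape used by AWS Lambda; the function name `handle_http` and the `name` query parameter are hypothetical and not taken from any specific project.

```python
import json


def handle_http(event, context):
    """Handle one HTTP request delivered as an API Gateway proxy event."""
    # Everything the function needs arrives in the event (or would be read
    # from an external store); nothing is kept in memory between calls.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")  # hypothetical query parameter
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"hello, {name}"}),
    }


if __name__ == "__main__":
    # Local invocation with a hand-written event; no cloud account needed.
    print(handle_http({"queryStringParameters": {"name": "serverless"}}, None))
```

Because the handler is a plain function of its input event, it can be invoked locally with a hand-written dictionary before it is ever deployed.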

Reference Architecture

The architecture is event-driven by nature. An API gateway (AWS API Gateway, Vercel) routes HTTP requests to individual functions. Event sources (SQS queues, EventBridge rules, S3 notifications, DynamoDB streams) trigger functions asynchronously. Step Functions or Temporal orchestrate multi-step workflows where each step is a function with built-in retry, timeout, and error handling. Serverless databases (DynamoDB for key-value, Neon/PlanetScale for relational) handle storage without capacity management. For existing monoliths, the strangler fig pattern enables gradual migration: endpoints move to functions one at a time while the monolith keeps serving the rest.
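
For the asynchronous path, a queue-triggered function might look like the sketch below. It assumes an SQS event source with the `ReportBatchItemFailures` response type enabled, so that only the failed messages are retried; `process_order` and the `order_id` field are hypothetical stand-ins for real business logic.

```python
import json


def process_order(order: dict) -> None:
    """Hypothetical business logic; raises when the message is invalid."""
    if "order_id" not in order:
        raise ValueError("missing order_id")


def handle_sqs_batch(event, context):
    """Process an SQS batch and report only the messages that failed."""
    failures = []
    for record in event.get("Records", []):
        try:
            process_order(json.loads(record["body"]))
        except Exception:
            # Returning the message ID asks SQS to redeliver just this
            # message instead of the whole batch.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Reporting failures per message keeps one bad message from forcing the rest of the batch to be reprocessed.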

- Function Layer: AWS Lambda, Vercel Functions, or Google Cloud Functions. Each function handles one responsibility: one API endpoint, one event processor, or one scheduled task. Functions are stateless; any state lives in databases or caches. Cold starts are reduced through provisioned concurrency (Lambda), Fluid Compute (Vercel), or language choice (compiled runtimes such as Go and Rust generally initialize fastest)

- Event Router: EventBridge for content-based event routing, SQS for simple queue processing, SNS for fan-out to multiple consumers. Events are the integration layer between functions — no function calls another function directly

- Workflow Orchestrator: Step Functions (AWS) or Temporal Cloud for multi-step processes — order fulfillment, document processing pipelines, approval workflows. Each step is independently retryable with configurable timeouts and fallback paths. Visual debugging through step-level execution traces

- API Composition Layer: API Gateway with request validation, throttling, and caching. GraphQL (AppSync) when clients need flexible queries across multiple serverless backends. WebSocket support (API Gateway WebSocket, Vercel) for real-time features
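
To make "independently retryable with configurable timeouts and fallback paths" concrete, the sketch below writes one workflow step as an Amazon States Language task (expressed as a Python dict) and computes the wait before each retry. The state names, the placeholder ARN, and the helper `retry_delays` are illustrative assumptions; only the field names follow the States Language.

```python
def retry_delays(interval_seconds: float, max_attempts: int,
                 backoff_rate: float) -> list[float]:
    """Seconds waited before each retry under exponential backoff."""
    return [interval_seconds * backoff_rate ** i for i in range(max_attempts)]


# One step of a hypothetical order-fulfillment workflow.
charge_payment_state = {
    "Type": "Task",
    # Placeholder ARN; replace with a real function in an actual deployment.
    "Resource": "arn:aws:lambda:REGION:ACCOUNT_ID:function:charge-payment",
    "TimeoutSeconds": 30,
    "Retry": [{
        "ErrorEquals": ["States.TaskFailed"],
        "IntervalSeconds": 2,
        "MaxAttempts": 3,
        "BackoffRate": 2.0,
    }],
    # Fallback path once retries are exhausted.
    "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
    "Next": "ShipOrder",
}

if __name__ == "__main__":
    policy = charge_payment_state["Retry"][0]
    print(retry_delays(policy["IntervalSeconds"], policy["MaxAttempts"],
                       policy["BackoffRate"]))
```

Keeping the retry policy in the workflow definition rather than in function code means each step can be tuned without redeploying the function it calls.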

Technology Choices

| Layer | Technologies |
|---|---|
| Compute | AWS Lambda, Vercel Functions (Fluid Compute), Google Cloud Functions, Cloudflare Workers |
| API | API Gateway (REST/WebSocket), Vercel, AppSync (GraphQL) |
| Orchestration | AWS Step Functions, Temporal Cloud, Vercel Workflow DevKit |
| Data | DynamoDB, Neon Postgres, PlanetScale, Upstash Redis, S3 |
| Events | EventBridge, SQS, SNS, Vercel Queues |
| Observability | CloudWatch, Datadog (serverless monitoring), Lumigo, X-Ray |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Traffic is variable with significant idle periods (scale-to-zero saves money) | Traffic is steady and high-volume; reserved instances are 50-70% cheaper at sustained load |
| You want zero infrastructure management and operations overhead | You need persistent connections (WebSocket servers, database connection pools), though Vercel handles this |
| The application decomposes naturally into event-driven functions | The workload requires more than 15 minutes of continuous execution per request (the AWS Lambda maximum) |
| You're migrating incrementally from a monolith and want per-endpoint rollout | The team is unfamiliar with distributed systems; serverless introduces distributed debugging complexity |

Our Approach

MicrocosmWorks treats serverless as an economic decision, not a religious one. We model the cost of serverless vs. containers vs. reserved instances for your actual traffic pattern (not a theoretical one), and recommend the option that minimizes total cost of ownership, including engineering time for operations. Our serverless architectures include per-function cost attribution (tagging every invocation with the feature that triggered it), cold start monitoring with alerting when P99 exceeds thresholds, and gradual migration playbooks that move one endpoint per sprint. We've migrated monoliths to serverless for media companies, SaaS products, and e-commerce platforms. In two cases, we've migrated parts back to containers when the workload characteristics changed.
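
The cold start alerting mentioned above can be sketched as a percentile check over recorded initialization times. This is a minimal illustration, not the monitoring stack itself: the function names, the nearest-rank percentile method, and the sample threshold are assumptions.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def cold_start_alert(init_durations_ms: list[float],
                     threshold_ms: float) -> bool:
    """True when the P99 initialization time exceeds the threshold."""
    return percentile(init_durations_ms, 99) > threshold_ms


if __name__ == "__main__":
    samples = [120.0] * 98 + [950.0, 980.0]  # made-up measurements
    print(cold_start_alert(samples, threshold_ms=500.0))
```

In practice the samples would come from whatever the observability layer records for function initialization, and the threshold would be set per endpoint.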

Related Blueprints

- Serverless Microservices Transformation — Full monolith-to-serverless migration strategy

- CI/CD Pipeline Modernization — Deployment pipelines for serverless architectures

- Automated Social Media Video Engine — Event-driven video processing with serverless functions

- AI Podcast Production Suite — Serverless audio processing pipeline

Related Case Studies

- Video Encoding Platform — Serverless video processing with AWS Lambda and Step Functions

- Subscription Management — Serverless webhook processing for multi-platform subscriptions

Frequently Asked Questions

Serverless-first works poorly for long-running processes exceeding 15 minutes, workloads requiring persistent WebSocket connections, applications with consistent high-throughput traffic where reserved capacity is cheaper, and systems needing low-level OS or network configuration. MicrocosmWorks evaluates each workload against these constraints during architecture design and recommends hybrid approaches where serverless handles API endpoints and event processing while containers or VMs run the workloads that need persistent compute. This pragmatic approach avoids the common mistake of forcing every component into serverless when it does not fit.

MicrocosmWorks mitigates Lambda cold starts through provisioned concurrency for critical endpoints, function bundle optimization to reduce initialization time, and strategic use of Lambda SnapStart for Java workloads, which can cut cold starts from seconds to sub-second startup. We also architect applications so that latency-sensitive paths use lightweight runtimes like Node.js or Python with minimal dependencies, keeping cold starts under 200ms even without provisioned concurrency. For endpoints where even that latency is unacceptable, we use Lambda@Edge or CloudFront Functions for sub-10ms responses.
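
One common way to keep initialization cheap and to observe cold starts is to do setup once at module scope and flag the first invocation of each execution environment. The sketch below assumes the usual Lambda behavior of reusing a loaded module across warm invocations; `_CONFIG` is a stand-in for real setup such as SDK clients.

```python
# Module scope runs once per execution environment, so expensive setup
# placed here is paid only on a cold start.
_CONFIG = {"greeting": "hello"}  # stand-in for real initialization work
_is_cold = True


def handler(event, context):
    """Report whether this invocation was the first in its environment."""
    global _is_cold
    cold, _is_cold = _is_cold, False
    # The flag could be emitted as a metric or log field for monitoring.
    return {"cold_start": cold, "greeting": _CONFIG["greeting"]}
```

Logging the flag alongside request latency makes it possible to separate cold start cost from ordinary handler time.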

MicrocosmWorks sets up local development environments using tools like SST (Serverless Stack), LocalStack, or the Serverless Framework's offline mode that emulate cloud services on the developer's machine with near-production fidelity. We implement integration test suites that run against ephemeral cloud environments spun up per pull request, so developers can validate against real AWS services without sharing a staging environment. This dual approach gives fast local iteration loops for development while catching cloud-specific issues before code reaches production.

MicrocosmWorks has found that serverless is dramatically cheaper for applications with variable or spiky traffic patterns (often 70-90% less than equivalent always-on container deployments), but the cost advantage narrows at sustained throughputs above 10-20 million invocations per month. We build cost projection models during architecture design that compare serverless per-invocation pricing against reserved container capacity for your specific traffic patterns, including hidden costs like API Gateway charges and data transfer fees. Our optimization service, available at $10-$35/hr consulting rates, regularly reviews serverless billing to identify waste from over-provisioned memory, excessive function durations, or unnecessary API Gateway usage.
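
A simplified version of such a cost projection is sketched below. The default prices are assumptions based on AWS Lambda's published x86 on-demand rates and should be checked against current pricing; the model deliberately ignores the free tier, API Gateway charges, and data transfer, and the function names are hypothetical.

```python
def lambda_monthly_cost(invocations: float, avg_duration_ms: float,
                        memory_mb: float,
                        price_per_million_requests: float = 0.20,
                        price_per_gb_second: float = 0.0000166667) -> float:
    """Request charge plus compute charge, in USD (assumed list prices)."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    request_cost = invocations / 1_000_000 * price_per_million_requests
    return request_cost + gb_seconds * price_per_gb_second


def break_even_invocations(fixed_monthly_cost: float, avg_duration_ms: float,
                           memory_mb: float) -> float:
    """Invocations per month at which Lambda matches a fixed monthly bill."""
    per_invocation = lambda_monthly_cost(1, avg_duration_ms, memory_mb)
    return fixed_monthly_cost / per_invocation


if __name__ == "__main__":
    # Hypothetical workload: 100 ms average duration at 512 MB.
    print(round(lambda_monthly_cost(10_000_000, 100, 512), 2))
    print(round(break_even_invocations(100.0, 100, 512)))
```

The break-even point moves sharply with duration and memory size, which is why the comparison has to use measured traffic rather than a generic invocation count.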

MicrocosmWorks uses connection pooling proxies like Amazon RDS Proxy or PgBouncer, deployed as a persistent layer between Lambda functions and the database, to multiplex thousands of Lambda connections into a manageable pool of actual database connections. We also design serverless applications to prefer DynamoDB or other connectionless databases for high-concurrency workloads where connection pooling would still create bottlenecks. For applications that must use relational databases, we implement connection-aware scaling limits that cap concurrent Lambda invocations to match the database's connection capacity.
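
The connection-aware scaling limit comes down to simple arithmetic, sketched here under stated assumptions: each function instance holds a fixed number of connections, and some headroom is reserved for other clients such as migrations or admin tools. The function and parameter names are hypothetical.

```python
def max_lambda_concurrency(db_max_connections: int,
                           reserved_for_other_clients: int,
                           connections_per_instance: int = 1) -> int:
    """Largest concurrency that cannot exhaust the database's connections."""
    usable = db_max_connections - reserved_for_other_clients
    if usable <= 0 or connections_per_instance <= 0:
        raise ValueError("no connection budget left for functions")
    return usable // connections_per_instance


if __name__ == "__main__":
    # Hypothetical database allowing 100 connections, 20 kept as headroom.
    print(max_lambda_concurrency(100, 20))
```

The resulting number could then be applied as the function's reserved concurrency so that a traffic spike queues or throttles instead of exhausting the database.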
