Cloud-Native Infrastructure

Infrastructure that's versioned, tested, and deployed like application code — because your platform is only as reliable as what's underneath it.

When You Need This

Your infrastructure is managed by clicking through cloud consoles. Environment drift between staging and production causes "works on my machine" issues at the infrastructure level. Scaling requires manual intervention, deployments involve SSH-ing into servers, and disaster recovery is a Google Doc that nobody has tested. You need infrastructure that's reproducible, version-controlled, self-healing, and observable — infrastructure that a team can operate without hero knowledge.

Pattern Overview

Cloud-native infrastructure treats infrastructure as code (IaC), runs workloads in containers orchestrated by Kubernetes (or managed equivalents), deploys through GitOps pipelines, and uses managed services where the operational trade-off is favorable. The pattern covers multi-region deployment for availability, horizontal pod autoscaling for elasticity, service mesh for inter-service communication, and comprehensive observability. The goal isn't "running on cloud" — it's building infrastructure that's automated, reproducible, and resilient by default.
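Horizontal pod autoscaling is easier to reason about once you see the arithmetic. The Kubernetes HorizontalPodAutoscaler documentation describes the core rule as `desired = ceil(current * currentMetric / targetMetric)`, with no scaling when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces that rule as a plain Python function. `desired_replicas` and its default bounds are hypothetical illustrations, not a Kubernetes API. The real controller also applies stabilization windows, scaling policies, and pod-readiness handling that this sketch omits.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,   # illustrative default (HPA minReplicas also defaults to 1)
    max_replicas: int = 10,  # illustrative default; a real HPA requires maxReplicas
    tolerance: float = 0.1,
) -> int:
    """Replica count chosen by the HPA scaling rule for a single metric.

    desired = ceil(current_replicas * current_metric / target_metric),
    left unchanged when the ratio is within `tolerance` of 1.0, and
    always clamped to [min_replicas, max_replicas].
    """
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("current_replicas and target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to target: keep the current count to avoid flapping.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

With 4 replicas averaging 90% CPU against a 60% target, `desired_replicas(4, 90, 60)` returns 6. At 63% it stays at 4, because the ratio (1.05) falls inside the tolerance band.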

Reference Architecture

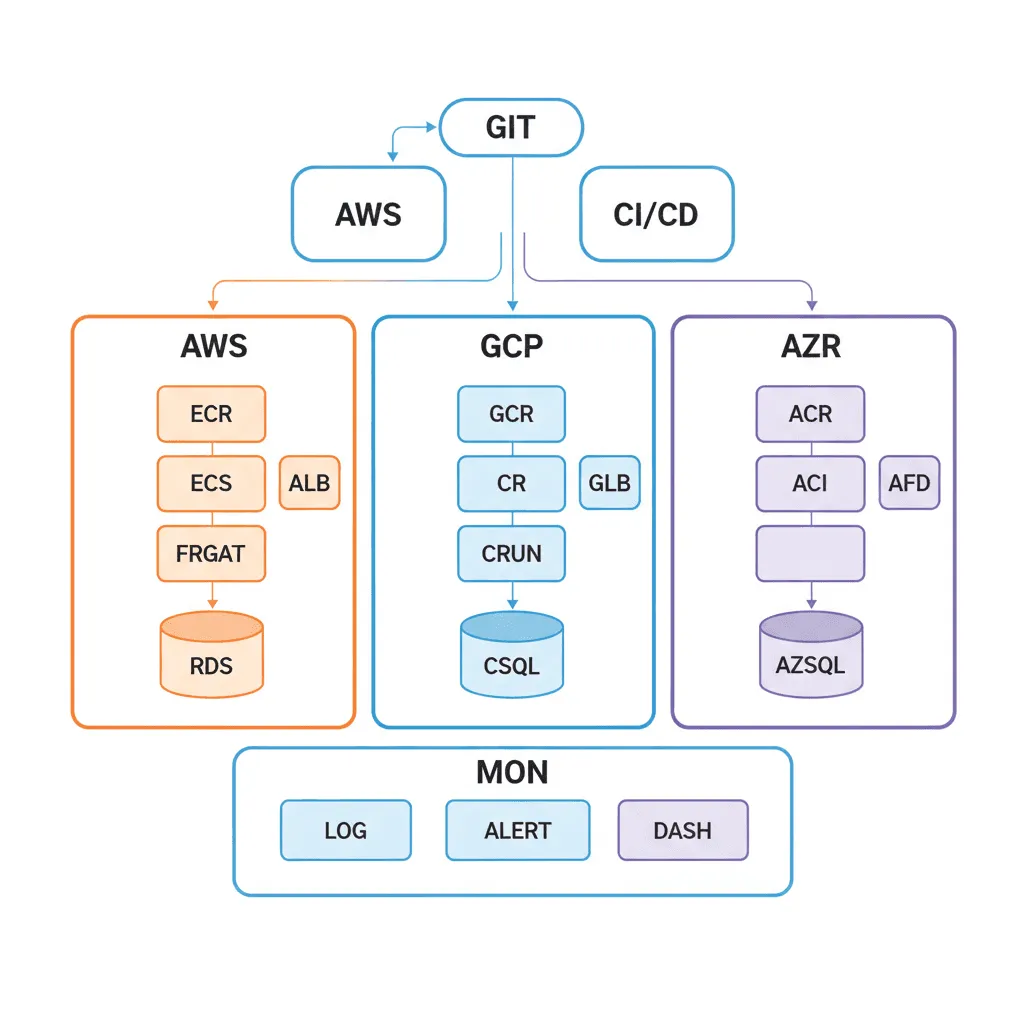

The architecture spans three planes. The control plane manages infrastructure provisioning through Terraform/Pulumi, runs GitOps controllers (ArgoCD/Flux), and handles secrets management (Vault/AWS Secrets Manager). The workload plane runs application containers in Kubernetes clusters (EKS, GKE, or AKS) with pod autoscaling, service mesh (Istio/Linkerd), and ingress management. The observability plane collects metrics (Prometheus), logs (Loki/CloudWatch), traces (Jaeger/Datadog), and alerts (PagerDuty/OpsGenie).

- IaC Foundation: Terraform or Pulumi modules that define every resource — VPCs, subnets, security groups, IAM roles, databases, caches, queues. Modularized by concern (networking, compute, data, observability) with environment-specific variable files

- Kubernetes Cluster: Multi-AZ deployment with node pools sized for workload types (general, compute-optimized, GPU). Namespace-per-environment or namespace-per-team isolation. Pod disruption budgets, resource quotas, and network policies

- GitOps Pipeline: ArgoCD or Flux watches a Git repository for manifests. Application deployments are pull requests: reviewed, approved, and automatically synced. Rollback is a `git revert`

- Observability Stack: Prometheus + Grafana for metrics, Loki or ELK for logs, Jaeger or Datadog for distributed tracing. SLO-based alerting that pages on customer impact, not resource utilization
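SLO-based alerting usually means alerting on error-budget burn rate rather than raw resource usage. Below is a minimal sketch of the multi-window burn-rate check described in Google's SRE Workbook. It assumes a 99.9% SLO over 30 days and the 1-hour/5-minute window pair with a 14.4 threshold. In practice these checks would be Prometheus recording and alerting rules. `Window`, `burn_rate`, and `should_page` are hypothetical names used only to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    """Request counts observed over one lookback window (e.g. 1h or 5m)."""

    total: int
    errors: int

    def error_ratio(self) -> float:
        return self.errors / self.total if self.total else 0.0


def burn_rate(window: Window, slo: float) -> float:
    """Error-budget consumption speed; 1.0 spends the budget exactly over the SLO period."""
    budget = 1.0 - slo  # e.g. 0.001 for a 99.9% availability SLO
    return window.error_ratio() / budget


def should_page(long_window: Window, short_window: Window, slo: float,
                threshold: float = 14.4) -> bool:
    """Page only when BOTH windows burn faster than `threshold`.

    The long window shows the problem is significant; the short window
    shows it is still happening, so the page clears soon after a fix.
    """
    return (burn_rate(long_window, slo) > threshold
            and burn_rate(short_window, slo) > threshold)
```

A sustained 2% error rate against a 99.9% SLO is a burn rate of about 20, which would exhaust a 30-day budget in roughly a day and a half, so it pages. A spike that has already subsided fails the short-window check and does not page.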

Design Decisions & Trade-offs


Technology Choices

| Layer | Technologies |
|---|---|
| Compute | Kubernetes (EKS, GKE, AKS), ECS Fargate, Cloud Run |
| IaC | Terraform, Pulumi, AWS CDK |
| GitOps | ArgoCD, Flux, GitHub Actions |
| Networking | Istio, Linkerd, AWS App Mesh, Nginx Ingress, Cert-Manager |
| Observability | Prometheus, Grafana, Datadog, Loki, Jaeger, PagerDuty |

When to Use / When to Avoid

| Use When | Avoid When |
|---|---|
| Running 5+ services that need independent scaling and deployment | You have a single application that can run on a PaaS (Vercel, Railway, Render) |
| Multiple teams contribute to shared infrastructure | Your team has fewer than 3 engineers, so Kubernetes operational burden would dominate |
| You need multi-region deployment for availability or compliance | The project is an MVP that doesn't need HA or complex orchestration |
| Compliance requires reproducible, auditable infrastructure | Cost optimization is critical and the workload fits serverless economics |

Our Approach

MicrocosmWorks delivers infrastructure as a product, not a one-time setup. We provide Terraform modules with CI/CD pipelines that plan, review, and apply infrastructure changes through pull requests, the same workflow your developers use for application code. Our Kubernetes deployments include production-grade defaults: pod disruption budgets, resource limits, network policies, and automated certificate rotation. We hand off with operational runbooks, Grafana dashboards, and on-call escalation policies so your team can operate the infrastructure independently.
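Pod disruption budgets are the default that most often surprises teams during node upgrades, so here is a minimal sketch of how a PodDisruptionBudget's `minAvailable` or `maxUnavailable` translates into the number of pods a voluntary eviction (such as a node drain) may remove right now. It follows the arithmetic described in the Kubernetes disruption documentation as we understand it, with percentages rounded up. The function names are hypothetical. In a real cluster you declare a `PodDisruptionBudget` manifest and the disruption controller reports the result in `status.disruptionsAllowed`.

```python
import math
from typing import Optional, Union

IntOrPercent = Union[int, str]  # e.g. 2 or "50%"


def _scaled(value: IntOrPercent, expected: int) -> int:
    """Resolve an int-or-percent field against the expected pod count, rounding up."""
    if isinstance(value, str) and value.endswith("%"):
        return math.ceil(int(value[:-1]) * expected / 100)
    return int(value)


def disruptions_allowed(
    expected_pods: int,
    healthy_pods: int,
    min_available: Optional[IntOrPercent] = None,
    max_unavailable: Optional[IntOrPercent] = None,
) -> int:
    """How many voluntary evictions a PDB would permit at this moment."""
    if (min_available is None) == (max_unavailable is None):
        raise ValueError("set exactly one of min_available or max_unavailable")
    if min_available is not None:
        desired_healthy = _scaled(min_available, expected_pods)
    else:
        desired_healthy = expected_pods - _scaled(max_unavailable, expected_pods)
    # Never negative: if too few pods are healthy, no eviction is allowed.
    return max(0, healthy_pods - desired_healthy)
```

With 3 replicas and `minAvailable: 2`, a drain may evict one pod at a time. If one pod is already unhealthy, `disruptions_allowed(3, 2, min_available=2)` returns 0 and the drain has to wait.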

Related Blueprints

- Cloud Migration & Cost Optimization — Migrating from on-prem or legacy cloud to cloud-native

- Multi-Region High-Availability Architecture — Active-active and active-passive multi-region patterns

- CI/CD Pipeline Modernization — GitOps pipeline design and implementation

- Hybrid Cloud for Regulated Industries — Cloud-native patterns with on-prem compliance constraints

- GPU Cluster Orchestration for AI Workloads — Kubernetes with GPU node pools for ML training

Related Case Studies

- GPU Infrastructure — RunPod and custom GPU cluster orchestration for AI workloads

- Video Encoding Platform — Containerized encoding pipelines with autoscaling

Related Architecture Patterns

Explore more design patterns and system architectures

Security-First Architecture

Security isn't a feature you add after launch. It's an architectural property — either the system was designed for it, or it wasn't.

Serverless-First Architecture

Pay for what you use, scale to zero when you don't, and stop managing servers entirely — but know when the economics stop working.

On-Off Scaling Architecture

Don't pay for idle GPUs. Provision compute just-in-time, process the workload, and tear it down — turning capital expense into a per-job operating cost.

Frequently Asked Questions

Cloud-native means designing applications specifically to exploit cloud capabilities like elastic scaling, managed services, and distributed architecture, rather than simply lifting on-premises applications into virtual machines in the cloud. MicrocosmWorks builds cloud-native systems using containerization, declarative infrastructure-as-code, service meshes, and CI/CD automation that treat infrastructure as ephemeral and replaceable rather than precious and long-lived. The practical difference is that a cloud-native application can scale from 10 to 10,000 users automatically, recover from infrastructure failures without human intervention, and deploy updates dozens of times per day.

MicrocosmWorks recommends Kubernetes for organizations running 10+ microservices that need advanced orchestration features such as autoscaling, rolling deployments, service discovery, and multi-environment consistency. For teams with fewer services or limited Kubernetes expertise, simpler platforms such as AWS ECS, Google Cloud Run, or Azure Container Apps are usually a better fit. We have seen many teams adopt Kubernetes prematurely and spend more time managing the cluster than building features, so we evaluate your actual workload complexity and team maturity before recommending the orchestration layer. Our assessment includes a TCO analysis comparing managed Kubernetes, serverless containers, and platform-as-a-service options for your specific scale.

MicrocosmWorks standardizes on Terraform for multi-cloud infrastructure provisioning and uses Pulumi for teams that prefer general-purpose languages such as TypeScript or Python over HCL. All infrastructure definitions are stored in Git and deployed through the same CI/CD pipeline as application code. We structure IaC repositories into reusable modules for networking, compute, databases, and observability that can be composed into environment-specific configurations, ensuring consistency between development, staging, and production. Every infrastructure change goes through pull request review with automated plan previews that show exactly what resources will be created, modified, or destroyed before any change is applied.
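The heart of a plan preview, and of drift detection, is a diff between the desired state in code and the actual state of the environment. The sketch below shows only that create/update/delete classification. It is not Terraform's planner, which also resolves dependencies, forced replacements, and provider-computed attributes. Resource addresses and attributes are represented as plain dictionaries, and the `plan` function and the example addresses are hypothetical.

```python
from typing import Any, Dict, List, Tuple

Resources = Dict[str, Dict[str, Any]]  # resource address -> attributes


def plan(desired: Resources, actual: Resources) -> List[Tuple[str, str]]:
    """Compare desired state (from code) with actual state and list the changes.

    Returns (action, address) pairs sorted by address, where action is
    "create", "update", or "delete". Unchanged resources are omitted.
    """
    changes: List[Tuple[str, str]] = []
    for address in sorted(desired.keys() | actual.keys()):
        if address not in actual:
            changes.append(("create", address))   # defined in code, missing live
        elif address not in desired:
            changes.append(("delete", address))   # live, no longer in code
        elif desired[address] != actual[address]:
            changes.append(("update", address))   # attributes have drifted
    return changes
```

For example, if code declares a `data.logs_bucket` with versioning enabled and a new `data.jobs_queue`, while the live environment has versioning disabled and an untracked `compute.legacy_vm`, `plan` reports one delete, one create, and one update. A reviewer sees exactly that list in the pull request before anything is applied.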

MicrocosmWorks designs cloud-native architectures with an abstraction layer that isolates cloud-specific dependencies behind well-defined interfaces, making it possible to swap providers for individual services without rewriting the entire application. We use portable technologies like Kubernetes, PostgreSQL, Redis, and OpenTelemetry wherever possible, and wrap cloud-specific services like DynamoDB or Cloud Spanner in adapter layers that can be reimplemented for alternative providers. This approach adds minimal overhead during initial development but saves months of migration effort if you later need to move workloads to a different provider or adopt a multi-cloud strategy for compliance or resilience reasons.
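A minimal sketch of the adapter idea, assuming a simple key-value use case: business logic depends on a small provider-neutral interface, and each cloud service gets its own adapter class. Only an in-memory adapter is shown here. A DynamoDB- or Redis-backed adapter would implement the same two methods using that provider's SDK. `KeyValueStore`, `InMemoryStore`, and `SessionService` are hypothetical names.

```python
from typing import Dict, Optional, Protocol


class KeyValueStore(Protocol):
    """Provider-neutral interface the application codes against."""

    def get(self, key: str) -> Optional[str]: ...

    def put(self, key: str, value: str) -> None: ...


class InMemoryStore:
    """Adapter for tests and local development.

    A cloud-backed adapter would expose the same get/put methods.
    """

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)

    def put(self, key: str, value: str) -> None:
        self._data[key] = value


class SessionService:
    """Business logic that depends only on the KeyValueStore interface."""

    def __init__(self, store: KeyValueStore) -> None:
        self._store = store

    def start(self, user_id: str, token: str) -> None:
        self._store.put(f"session:{user_id}", token)

    def token_for(self, user_id: str) -> Optional[str]:
        return self._store.get(f"session:{user_id}")
```

Moving to another provider then means writing one new adapter class and changing the line that constructs it. `SessionService` itself does not change.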

A typical cloud-native infrastructure engagement begins with a 2-week assessment where MicrocosmWorks evaluates your current architecture, workloads, and team capabilities, followed by a 4-8 week platform build that delivers the foundational infrastructure including container orchestration, CI/CD pipelines, observability, and security controls. We then run a 4-6 week application migration phase where we containerize and deploy your first 2-3 services onto the new platform with your engineering team embedded alongside ours for hands-on knowledge transfer. Our cloud-native consulting rates range from $10-$40/hr, and the full engagement from assessment through production readiness typically spans 10-16 weeks.

Need Help Implementing This Architecture?

Our architects can help design and build systems using this pattern for your specific requirements.
